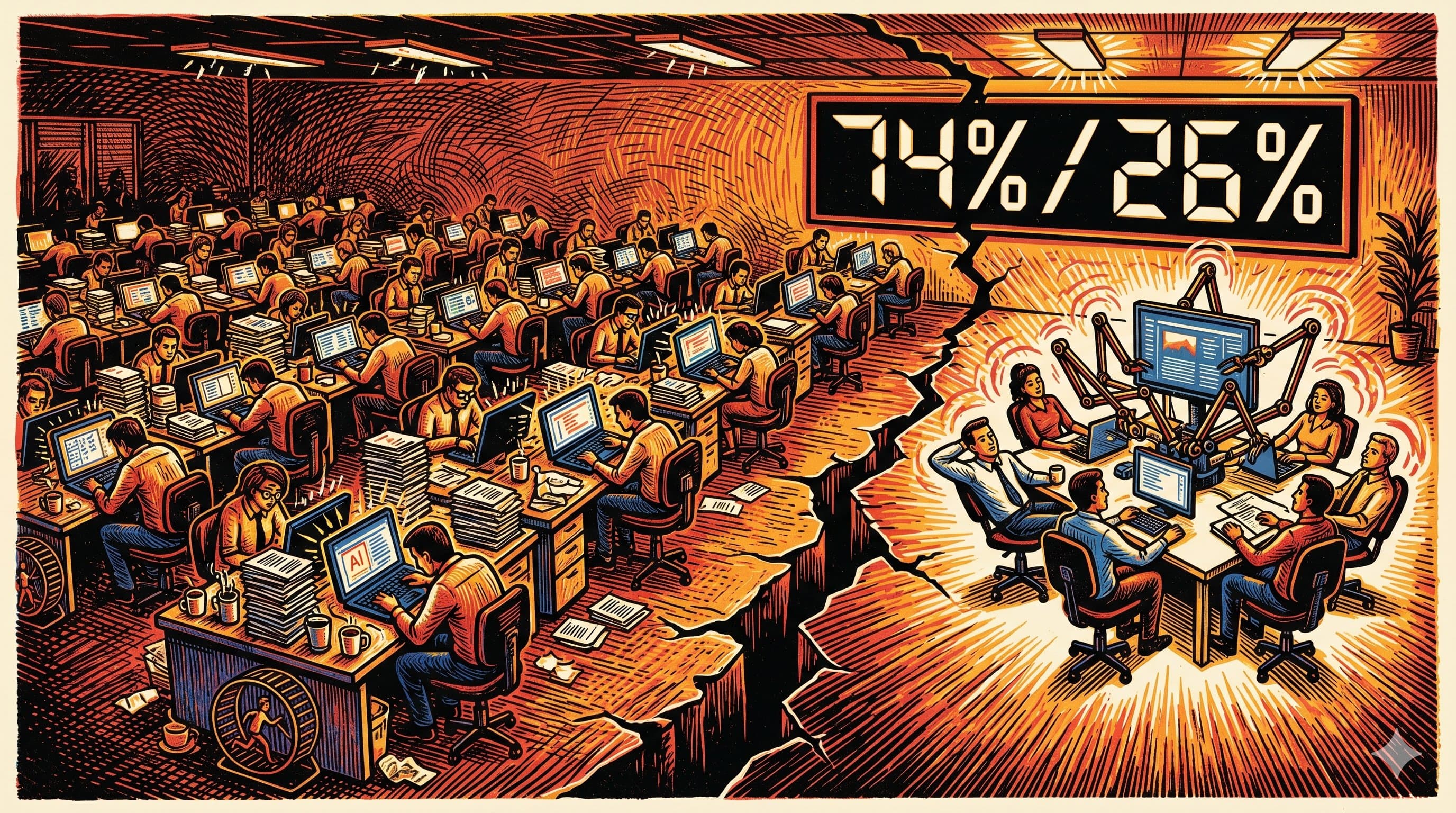

PwC surveyed 1,217 senior executives across 25 sectors and found that 20 per cent of companies capture 74 per cent of all AI-driven economic value. The remaining 80 per cent split the leftovers.

That is not a bell curve. That is a cliff.

The top performers are not spending more on AI. They are not running more pilots. They are not using fancier models. According to PwC's 2026 AI Performance Study, the leading companies generate 7.2 times more AI-driven revenue and efficiency gains than the average. The difference is not budget. It is behaviour.

If this sounds like it only matters to Fortune 500 executives making procurement decisions, keep reading. Because the same 80/20 dynamic plays out at the individual level, and the data on that is even more uncomfortable.

Most organisations are spending money to feel busy

Here is the uncomfortable truth buried in PwC's numbers: 56 per cent of CEOs, surveyed separately in PwC's 29th Global CEO Survey of 4,454 chief executives, report that AI has delivered neither increased revenue nor lower costs over the past twelve months. More than half. Spending real money. Getting nothing measurable back.

The AI Performance Study paints the same picture from a different angle. The majority of companies are deploying AI. They have the subscriptions. They have the enterprise licences. They have the pilot programmes. What they do not have is financial returns.

PwC calls this the gap between AI "activity" and AI "fitness." Activity is buying tools, running demos, and writing strategy documents with the word "transformation" in the title. Fitness is actually reorganising work around what AI can do.

The divide is not about how much AI these companies deploy, but about what they point AI at.

The top 20 per cent are not optimising spreadsheets faster. They are using AI to enter new markets, build new products, and pursue revenue opportunities that emerge when industry boundaries dissolve. PwC found that industry convergence, using AI to expand beyond your traditional sector, is the single strongest predictor of AI-driven financial performance. Stronger than efficiency. Stronger than cost reduction. Stronger than how many tools you have in your stack.

The same gap exists between individual workers

The enterprise data mirrors what is happening at desks and laptops everywhere.

ActivTrak's 2026 State of the Workplace report, drawing on 443 million hours of workplace activity across 163,638 employees, found that 57 per cent of AI users spend less than 1 per cent of their total work time inside AI tools. Not 1 per cent of their AI time. One per cent of their entire working day.

That is not adoption. That is tourism.

The same study found that the productivity sweet spot sits at 7 to 10 per cent of working time spent in AI tools. Most people never get there. And the ones who do are not the ones with the most tools. ActivTrak's data shows a clear inversion point: employees using more than three AI tools see productivity decline, while those using three or fewer report improved efficiency.

The average organisation now runs seven or more AI tools. That is already past the point where adding tools hurts instead of helps.
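To put the time shares quoted above in concrete terms, here is a minimal sketch that converts them into minutes per day. It assumes an 8-hour working day, which is an illustrative assumption and not a figure from the ActivTrak report; the function name `minutes_in_ai_tools` is also made up for this example.

```python
# Assumption: an 8-hour (480-minute) working day.
# The report quotes percentages only; the day length is illustrative.
WORKDAY_MINUTES = 8 * 60


def minutes_in_ai_tools(share_of_day: float,
                        workday_minutes: int = WORKDAY_MINUTES) -> float:
    """Convert a share of working time (0.01 means 1 per cent) into minutes per day."""
    return share_of_day * workday_minutes


if __name__ == "__main__":
    shares = [
        ("1 per cent ceiling for most AI users", 0.01),
        ("sweet spot, low end", 0.07),
        ("sweet spot, high end", 0.10),
    ]
    for label, share in shares:
        print(f"{label}: {minutes_in_ai_tools(share):.1f} minutes per day")
```

Under that assumption, 1 per cent of the day is under five minutes, while the 7 to 10 per cent range works out to roughly 34 to 48 minutes.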

So the picture looks like this: most companies have adopted AI, most workers have tried AI, and most of both are getting almost nothing from it. A small minority, roughly the same 20 per cent that PwC identified at the enterprise level, have figured out something the rest have not.

Why the 80 per cent are stuck

Three patterns explain why most AI investment produces activity without returns.

The pilot that never graduates. Companies run a proof of concept. It works. Then it sits there. A 2026 UC Today analysis used the term "pilot purgatory" to describe most enterprise AI: used enough to feel like progress, never deeply enough to create results. In a pilot, humans quietly compensate for what the system lacks. They provide missing context, reconcile data, approve actions. The AI appears to work because people are doing the hard parts around it. When you try to scale it without those humans in the loop, the whole thing falls over.

The tool sprawl trap. Every new AI capability arrives as a new subscription. A writing tool. A meeting summariser. A code assistant. An image generator. A data analyst. Each one solves a narrow problem. None of them talk to each other. The result is seven tools, seven logins, seven sets of context that the user must manually bridge. Gloria Mark's research at UC Irvine found that it takes an average of 23 minutes and 15 seconds to get back to a task after an interruption. If your AI "productivity stack" requires you to switch contexts five times an hour, the maths does not work in your favour.
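The arithmetic behind that last sentence can be sketched in a few lines. This is a rough illustration, not a model of real attention: it assumes every switch costs the full 23 minutes 15 seconds quoted above and that the costs simply add up, which makes it an upper bound. The function names are hypothetical.

```python
# Figure quoted in the article: 23 minutes 15 seconds to refocus.
REFOCUS_MINUTES = 23 + 15 / 60  # 23.25 minutes


def refocus_demand_per_hour(switches_per_hour: int,
                            refocus_minutes: float = REFOCUS_MINUTES) -> float:
    """Minutes of refocusing implied per hour.

    Assumption: each switch costs the full average and costs add linearly.
    """
    return switches_per_hour * refocus_minutes


def max_switches_within_budget(budget_minutes: float = 60.0,
                               refocus_minutes: float = REFOCUS_MINUTES) -> int:
    """Largest whole number of switches whose refocus cost fits in the budget."""
    return int(budget_minutes // refocus_minutes)


if __name__ == "__main__":
    demand = refocus_demand_per_hour(5)
    print(f"5 switches an hour imply {demand:.2f} minutes of refocusing")
    print(f"Switches that fit in one hour: {max_switches_within_budget()}")
```

Under these assumptions, five switches imply 116.25 minutes of refocusing per 60-minute hour, and the hour is already used up at fewer than three switches.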

Surface-level use driven by anxiety. HBR reported in February 2026 that 80 per cent of employees harbour significant concerns about AI's implications for their careers. That fear does not drive deeper adoption. It drives performative adoption: trying the tools enough to look competent, never integrating them deeply enough to change how you actually work. As the HBR research put it, AI initiatives stall because "employees' industry-shaped anxiety about relevance, identity, and job security drives surface-level use without real commitment."

What the 20 per cent do differently

The BCG-Harvard study (758 consultants, randomised controlled trial) found that people using GPT-4 completed 12.2 per cent more tasks, worked 25.1 per cent faster, and produced work rated more than 40 per cent higher in quality. But only when the task fell within AI's capability frontier. When the task crossed that frontier, requiring analytical reasoning the model could not reliably perform, AI-assisted consultants were 19 percentage points less likely to produce correct solutions than those working without AI.

The researchers called it the "jagged technological frontier." AI capability is not a smooth line. It is jagged, with pockets of brilliance next to pockets of failure. The professional skill is not "using AI." It is knowing where the jagged edge sits for your specific work.

That is the real differentiator in PwC's data. The top 20 per cent are not more enthusiastic about AI. They are more precise about it. Specifically, PwC found that leading companies are:

- Nearly twice as likely (1.9x) to deploy AI in autonomous, self-optimising configurations rather than basic chatbot interactions.

- Nearly twice as likely (1.8x) to use AI that executes multiple tasks within defined guardrails.

- Two to three times more likely to use AI to identify and pursue growth opportunities that emerge from industry convergence.

They have moved past "ask the chatbot a question" into "redesign the workflow around what AI can do."

Three mental models for the other 80 per cent

If you recognise your organisation, or yourself, in the 80 per cent, here are three frameworks that the data consistently supports.

Fewer tools, deeper integration. The ActivTrak data is unambiguous: three tools or fewer, used at 7 to 10 per cent of your working time, is the sweet spot. That means choosing one capable model and connecting it to your actual work context (email, documents, calendar, data, code) rather than maintaining a rotating cast of specialist apps that each see 2 per cent of your world. Model Context Protocol (MCP) makes this technically possible in 2026. The question is whether you will consolidate or keep collecting.

Point AI at growth, not just efficiency. PwC's single strongest finding is that the top performers use AI for revenue generation and new market entry, not just cost cutting. At the individual level, the equivalent is this: stop using AI to do the same work slightly faster. Start using it to do work you could not previously attempt. Write the analysis you did not have time for. Research the opportunity that was always too complex to scope manually. Build the prototype that was always "next quarter."

Learn the jagged frontier for your role. The BCG-Harvard study shows that the value of AI depends heavily on whether you deploy it within its capability boundary. That boundary is different for every role and shifts with every model update. The skill is not prompting. It is calibration: knowing which tasks to hand over completely, which to collaborate on, and which to keep entirely human. Nobody can teach you this for your specific job. You learn it by doing the work with AI, paying attention to where it helps and where it quietly makes things worse, and adjusting.

The 20 per cent are not AI enthusiasts. They are workflow designers who happen to use AI. The distinction matters.

The gap will widen before it closes

PwC's study is a snapshot, but the trajectory is clear. The companies generating 7.2 times the returns are reinvesting those returns into deeper AI integration, which produces further returns, which funds further integration. This is a compounding advantage. The same compounding works at the individual level: practitioners who have built effective AI workflows are now building on top of those workflows, while the majority are still trying to figure out which subscription to keep.

The consolation, if there is one, is that the barrier is not intelligence or budget. It is design. The 80 per cent are not failing because AI does not work. They are failing because they have not redesigned their work to let AI work.

That redesign is the leverage.

Sources

- PwC, "Global AI Performance Study 2026: Three-quarters of AI's economic gains are being captured by just 20% of companies," April 13, 2026. pwc.com

- PwC, "29th Annual Global CEO Survey," January 2026. pwc.com

- PwC, "Decoding ROI from AI: Want AI ROI? Go for growth," April 2026. pwc.com

- ActivTrak, "2026 State of the Workplace Report," Q1 2026. activtrak.com

- Dell'Acqua, F. et al., "Navigating the Jagged Technological Frontier: Field Experimental Evidence of the Effects of AI on Knowledge Worker Productivity and Quality," Harvard Business School Working Paper 24-013 (2023), published in Organization Science, 2026.

- Mark, Gloria. Research on attention and context switching, UC Irvine. Findings: 23 minutes 15 seconds average refocus time after task interruption.

- Ferrazzi, Keith et al., "Why AI Adoption Stalls, According to Industry Data," Harvard Business Review, February 2026. hbr.org

- UC Today, "AI Pilot Purgatory: Why Enterprise AI Rollouts Fail to Scale," 2026. uctoday.com

- Stanford Institute for Human-Centered AI, "2026 AI Index Report," April 2026. hai.stanford.edu